
SDI Framework

– Design and decide – Designs are assemblages of elements, so we need to be able to record these elements (and relate them to externalities, e.g. technologies). Design and decision making is based on analysis of alternatives. If we have the information on drivers and requirements and are able to undertake that analysis, we can explain the basis of decisions and designs;

– Plan, Programme & Phase – these require us to understand sequencing, prerequisites and co-requisites. Intrinsic to this is the relationship between the requirements and the designs;

– Promulgate, educate, communicate and socialise – we need to be able to very selectively extract information from the framework that is suited to a particular audience, purpose or interest, so that we don’t need to manually reconstruct communication artefacts for each different purpose;

– Estimate the effort, costs, risk and timeframes associated with people, technology and procedures – in practice, costs can only be estimated effectively by examining the proposed implementation, i.e. the designs. But decisions need to be made in relation to the requirements and outcomes, so we need to understand how the elements of the implementation relate to the requirements (and the marginal economic impact of each requirement);

– Support the validation, assessment, quality assurance and review – by making the above relationships explicit and transparent we provide a mechanism for doing this.
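The Plan, Programme & Phase concern above amounts to ordering work so that prerequisites come first. A minimal sketch, assuming prerequisites are recorded as a simple mapping from each work item to the items it depends on (all item names here are hypothetical), uses Python's standard `graphlib` module to group items into phases:

```python
from graphlib import TopologicalSorter

def plan_phases(prerequisites: dict[str, set[str]]) -> list[list[str]]:
    """Group work items into phases so that every item's prerequisites
    fall in an earlier phase. Raises graphlib.CycleError on a cycle."""
    sorter = TopologicalSorter(prerequisites)
    sorter.prepare()
    phases = []
    while sorter.is_active():
        # Everything whose prerequisites are all complete can run together.
        ready = sorted(sorter.get_ready())
        phases.append(ready)
        sorter.done(*ready)
    return phases

# Hypothetical work items, each mapped to the items it depends on.
prerequisites = {
    "data_model": set(),
    "skills_register": set(),
    "address_register": {"data_model"},
    "training": {"skills_register"},
    "parcel_service": {"data_model", "address_register"},
    "public_portal": {"parcel_service"},
}

for number, phase in enumerate(plan_phases(prerequisites), start=1):
    print(f"Phase {number}: {', '.join(phase)}")
```

Co-requisites are not modelled in this sketch; one option would be to merge co-requisite items into a single node before sorting. A cycle in the prerequisites makes `prepare()` raise `graphlib.CycleError`, which is itself a useful signal that the plan is inconsistent.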

1.3 What is best practice (what can we learn from)

We can learn from a number of standards and approaches applied elsewhere by examining existing methods, e.g. Val IT (a framework addressing the governance of IT-enabled business investments), COBIT (Control Objectives for Information and related Technology), OSIMM (The Open Group Service Integration Maturity Model), CMMI (Capability Maturity Model Integration), FEAF (Federal Enterprise Architecture Framework), DODAF (Department of Defense Architecture Framework), TOGAF (The Open Group Architecture Framework), the Zachman Framework, ITIL (Information Technology Infrastructure Library), IFW (Information FrameWork), DSM (Design Structure Matrix) and Pattern Language (Alexander’s seminal work). These come from many disciplines, e.g. engineering, architecture, portfolio analysis, defence, IT, etc.

Our approach to an SDI framework is informed by these sources and others. A number of them originated in government (and have subsequently been adopted in the private sector). In most cases, the effectiveness of these approaches has been significantly affected by their means of implementation (usually many documents and consultants). We need an approach that minimises the need for both.

Space does not permit a full review of all of these, but we believe there is general consensus that the following make good sense:

– Business case and investment models – FEAF, Val IT

– Reference Models- FEAF, OSIMM

– Patterns – DODAF, Pattern Language, TOGAF

– Principles – Pattern Language, TOGAF

– Standards – TOGAF, FEAF, ITIL, OSIMM

– Taxonomies – DODAF, Zachman

– Maturity models – CMMI, OSIMM, TOGAF (implied)

– Compliance mechanisms – COBIT, FEAF, OSIMM, CMMI

– Different levels (of detail, of technicality) – Zachman, Pattern Language

– Instantiation – almost all of these frameworks can be instantiated

1.4 Additional organisational and process challenges

Government is usually implemented via a set of federated agencies – global, regional, federal, state and local. One model for data sharing through government agreement is that negotiated between the Victorian Government and local government (LG) [Gruen].

With an NSDI we are also able to deal with the evolving roles of both public and private sector organisations, and of both national and international players, ranging from Google to the United Nations.

In addition to the normal challenges, we are usually dealing with federated and distributed organisations. That is to say, we have a network of organisations with different responsibilities, goals and agendas, and we need to understand the basis on which trade-offs are made. The Netherlands Gideon project offers excellent direction here [Gideon].

Further, there is an increasing demand for open government, as set out in ‘The three pillars of open government’ stated by the Australian Federal Government [Senator Lundy]:

– Citizen-centric services – giving people access to their data about their land;

– Open and transparent government – being able to say what is known about land;

– Innovation facilitation – facilitating innovation by all parties on knowledge about the NSDI.

We also need to deal with archival and reference requirements as well as transactional and functional requirements. This has a number of implications, including that we need to use both information-engineering-oriented and process-oriented techniques to understand them.

In the ideal world, the vast majority of data in an NSDI system accretes as a natural by-product of transactional activities, i.e. few additional (non-transaction-related) costs need to be incurred. Similarly, the data that populates an NSDI framework needs to accrete as a by-product of the work undertaken on the NSDI. In both cases, frameworks and taxonomies are required to make this possible, and small adjustments to day-to-day processes are required to enable it to occur.

2. SDI framework based on multidiscipline best practice

2.1 What analysis is enabled by the semantic precision of the framework

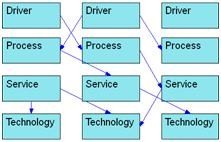

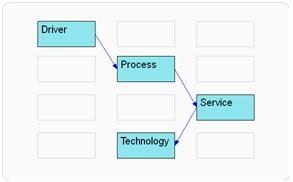

There are two types of analysis that we want to be able to do. We call them referential and inferential.

Referential analysis allows us to confirm that the relationships between elements are correct. It also allows us to follow a path of relationships, e.g. if this skill is unavailable, what is affected? If this goal is to be achieved, what is required? What elements are affected by this project?
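The path-following questions above can be answered by walking the relationship graph. A minimal sketch, assuming the framework stores each element together with the set of elements it depends on (the element names below are hypothetical): following the edges forward answers what a goal requires, and following them in reverse answers what is affected when a skill becomes unavailable.

```python
from collections import deque

def reachable(start: str, edges: dict[str, set[str]]) -> set[str]:
    """Breadth-first walk: every element reachable from `start` via `edges`."""
    seen: set[str] = set()
    queue = deque([start])
    while queue:
        for nxt in edges.get(queue.popleft(), set()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen

def invert(edges: dict[str, set[str]]) -> dict[str, set[str]]:
    """Turn 'X depends on Y' edges into 'Y is depended on by X' edges."""
    inverted: dict[str, set[str]] = {}
    for source, targets in edges.items():
        for target in targets:
            inverted.setdefault(target, set()).add(source)
    return inverted

# Hypothetical framework elements, each mapped to what it depends on.
depends_on = {
    "goal:online_land_titles": {"service:parcel_lookup"},
    "service:parcel_lookup": {"process:validate_parcel", "data:parcel"},
    "process:validate_parcel": {"skill:cadastral_surveying"},
}

# If this goal is to be achieved, what is required?
print(sorted(reachable("goal:online_land_titles", depends_on)))
# If this skill is unavailable, what is affected?
print(sorted(reachable("skill:cadastral_surveying", invert(depends_on))))
```

Storing only one direction of each relationship and inverting on demand keeps the recorded data free of duplicates that could drift out of step.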

When we look at this:

It allows us to quickly see:

Inferential analysis is useful when we have compositions or reference models, where we can relate our implementation to the reference models. It allows us to infer which relationships should exist, i.e. those that are implied to exist but have not been recorded. It therefore provides a check on correctness.

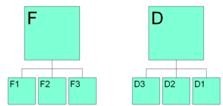

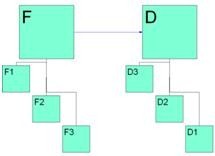

Reference models would usually be instantiated. For example, a functional (F) reference model indicates that a function is performed, and our instantiation would indicate how we perform it. A data (D) reference model indicates the data we need, and our instantiation would indicate exactly how we manage it.
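Instantiation can be recorded as a link from each implementation element to the reference-model element(s) it realises. A minimal sketch, assuming such a mapping exists (all element names are hypothetical), shows two simple consistency checks: reference functions that nothing in our implementation performs, and implementation elements that claim to realise something the reference model does not contain.

```python
def uninstantiated(reference_model: set[str], instantiates: dict[str, set[str]]) -> set[str]:
    """Reference elements that no implementation element instantiates."""
    covered = set().union(*instantiates.values())
    return reference_model - covered

def unknown_references(reference_model: set[str], instantiates: dict[str, set[str]]) -> set[str]:
    """Implementation elements that point at something outside the reference model."""
    return {impl for impl, refs in instantiates.items() if not refs <= reference_model}

# Hypothetical functional (F) reference model and our instantiation of it.
functional_reference = {"F:register_title", "F:transfer_title", "F:survey_parcel"}
instantiates = {
    "Online titles service": {"F:register_title"},
    "Surveyor lodgement portal": {"F:survey_parcel"},
    "Bulk import job": {"F:import_titles"},
}

print(uninstantiated(functional_reference, instantiates))      # {'F:transfer_title'}
print(unknown_references(functional_reference, instantiates))  # {'Bulk import job'}
```

The same two checks would apply unchanged to a data (D) reference model.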

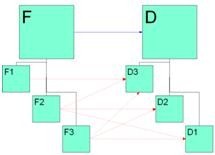

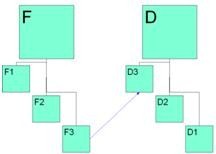

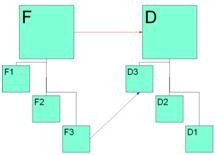

Let us say F >> F1, F2, F3 (i.e. F decomposes into F1, F2, F3) and D >> D1, D2 (i.e. D decomposes into D1, D2).

If D relates to F, we can tell that one or more of F1, F2, F3 must relate to one or more of D1, D2, i.e. in the following diagram one of the red relationships must exist.
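The first inference (a link between two wholes must be supported by at least one link between their parts) can be checked mechanically. A minimal sketch, assuming decompositions are held as a mapping from each element to its parts and links as (function, data) pairs; the data mirrors the F and D example above:

```python
def unsupported_links(decomposes: dict[str, set[str]], relates: set[tuple[str, str]]) -> set[tuple[str, str]]:
    """Links (f, d) for which no part of f relates to any part of d.
    Assumes links are stored as (function, data) pairs."""
    def parts(element: str) -> set[str]:
        # An element with no recorded decomposition stands in for itself.
        return decomposes.get(element, {element})

    return {
        (f, d)
        for f, d in relates
        if not any((fp, dp) in relates for fp in parts(f) for dp in parts(d))
    }

decomposes = {"F": {"F1", "F2", "F3"}, "D": {"D1", "D2"}}

print(unsupported_links(decomposes, {("F", "D")}))                # {('F', 'D')}
print(unsupported_links(decomposes, {("F", "D"), ("F2", "D1")}))  # set()
```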

We can also tell that if F3 relates to D2, we should expect to see F relating to D.
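The converse inference (a link between parts implies a link between their wholes) can also be automated, and doing so handles several levels of decomposition at once. A minimal sketch, assuming a strict, acyclic hierarchy in which every element has at most one parent. As a design choice it only pairs elements the same number of levels above the recorded link, so mixed-level implications such as F3 relating to D are left out of the report:

```python
def parent_map(decomposes: dict[str, set[str]]) -> dict[str, str]:
    """Invert the decomposition; assumes each element has at most one parent."""
    return {part: whole for whole, parts in decomposes.items() for part in parts}

def ancestors(element: str, parent_of: dict[str, str]) -> list[str]:
    """Wholes that `element` rolls up into, nearest first (hierarchy must be acyclic)."""
    chain = []
    while element in parent_of:
        element = parent_of[element]
        chain.append(element)
    return chain

def implied_but_missing(decomposes: dict[str, set[str]], relates: set[tuple[str, str]]) -> set[tuple[str, str]]:
    """Links implied at higher levels by recorded (function, data) links but not recorded."""
    parent_of = parent_map(decomposes)
    missing = set()
    for f, d in relates:
        # Climb both ends in step, one level at a time, and check each rolled-up pair.
        for pair in zip(ancestors(f, parent_of), ancestors(d, parent_of)):
            if pair not in relates:
                missing.add(pair)
    return missing

decomposes = {"F": {"F1", "F2", "F3"}, "D": {"D1", "D2"}}
print(implied_but_missing(decomposes, {("F3", "D2")}))              # {('F', 'D')}
print(implied_but_missing(decomposes, {("F3", "D2"), ("F", "D")}))  # set()
```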

While this seems obvious in this example, when the relationships above are all described textually, e.g. in a document, inconsistencies are not so easy to see.

Even when they are dealt with graphically, these inconsistencies are hard to see when there are large amounts of information (or multiple levels of decomposition). In both cases, best practice would be to have systems do these checks rather than checking for them manually.
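Having a system do these checks first requires the relationships to be captured in a structured rather than purely textual form. A minimal sketch, assuming a hypothetical plain-text notation that reuses the `>>` decomposition symbol from above and adds `--` for "relates to", parses such statements and flags any related element that was never declared in a decomposition, which is the kind of slip that is easy to miss in prose:

```python
import re

# Hypothetical notation: "F >> F1, F2, F3" declares a decomposition,
# "F3 -- D2" records that F3 relates to D2.
DECOMPOSE = re.compile(r"^\s*(\w+)\s*>>\s*(.+?)\s*$")
RELATE = re.compile(r"^\s*(\w+)\s*--\s*(\w+)\s*$")

def parse(text: str) -> tuple[dict[str, set[str]], set[tuple[str, str]]]:
    """Turn statements into a decomposition map and a set of (a, b) links."""
    decomposes: dict[str, set[str]] = {}
    relates: set[tuple[str, str]] = set()
    for lineno, line in enumerate(text.splitlines(), start=1):
        if not line.strip():
            continue  # ignore blank lines
        if m := DECOMPOSE.match(line):
            parts = {p.strip() for p in m.group(2).split(",") if p.strip()}
            decomposes.setdefault(m.group(1), set()).update(parts)
        elif m := RELATE.match(line):
            relates.add((m.group(1), m.group(2)))
        else:
            raise ValueError(f"line {lineno}: cannot parse {line!r}")
    return decomposes, relates

def undeclared(decomposes: dict[str, set[str]], relates: set[tuple[str, str]]) -> set[str]:
    """Elements used in links that appear in no decomposition statement."""
    declared = set(decomposes).union(*decomposes.values())
    return {e for link in relates for e in link if e not in declared}

statements = """
F >> F1, F2, F3
D >> D1, D2
F -- D
F3 -- D4
"""
decomposes, relates = parse(statements)
print(undeclared(decomposes, relates))  # {'D4'}
```

Once the statements are parsed, the same structures can feed the decomposition checks described earlier in this section.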

To be concluded in March 11
