| GNSS, TIME | |

SynchroNet – an innovative system

Franco Gottifredi, Monica Gotta, Enrico Varriale, Daniele Cretoni

|

||||

| Inter-node data exchange is guarded by the Networking Module, which applies a second, packet-level encryption and cryptographic signature to outbound data before routing it through the SynchroNet VPN.

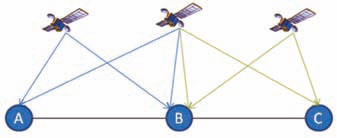

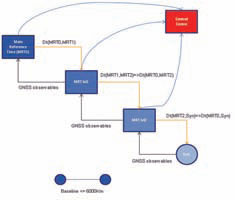

Additionally, each node provides synchronization interfaces by exporting synchronization products in several ways. These products are exposed through standard interfaces (i.e. standard analog frequency and PPS signals, filesystem objects or TCP/IP-based connections); this approach is consistent with the modular nature of the system and allows SynchroNet to be regarded as a black box providing a higher-level service, entirely defined by its interfaces. This design eases the integration of SynchroNet into pre-existing infrastructures and permits effective maintenance cycles.

Figure 6: LCVTT technique

SynchroNet Distributed Synchronization

The choice to design a distributed common-view based synchronization […]

In particular, one of the main design goals was to have a system that could scale to an arbitrary service coverage area without requiring recurrent upgrades due to the increased load on servers and network equipment, and that could cope with some limitations of the Common View and Linked Common View synchronization techniques.

By running both short-term and long-term performance analyses of Common View algorithms using the IGS (International GNSS Service) network, it was found that the optimal minimum number of satellites in common view is five. Again, by processing GPS almanac data and cross-correlating with the IGS station network, it was found that a direct […] For longer baselines the LCVTT (Linked Common View Time Transfer) approach should be applied.

A generalization of the LCVTT technique is represented in Figure 6. Synchronization between stations A and C is carried out by computing

ΔT(A,C) = ΔT(A,B) – ΔT(B,C)

Figure 7: SynchroNet distributed synchronization process

The first improvement given by wrapping synchronization into a dedicated homogeneous network is the ability to distribute the computational process.
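As an illustration of the common-view and linked common-view computations discussed above, the following Python sketch links two stations through an intermediate one. It is a minimal sketch under stated assumptions, not SynchroNet's actual algorithm: the function names, the per-satellite data layout (a mapping from satellite ID to the measured difference between the station clock and satellite time, in nanoseconds) and the sample values are all hypothetical. It also assumes the sign convention ΔT(X,Y) = T_X − T_Y for every pair, under which the link through B is the sum of the two legs; the difference form written in the text corresponds to taking the second leg with the opposite sign. The five-satellite threshold is the minimum reported in the text.

```python
from statistics import mean

# Minimum number of satellites in common view, as reported in the text.
MIN_COMMON_SATS = 5


def common_view_offset(obs_x, obs_y, min_common=MIN_COMMON_SATS):
    """Estimate T_x - T_y (ns) from two stations' per-satellite readings.

    obs_x, obs_y: dict of satellite ID -> (station clock - satellite time), ns.
    Returns None when fewer than `min_common` satellites are shared.
    """
    common = set(obs_x) & set(obs_y)
    if len(common) < min_common:
        return None
    # Errors common to both stations for a given satellite cancel here.
    return mean(obs_x[s] - obs_y[s] for s in common)


def linked_offset(*legs):
    """Chain pairwise offsets (T_a - T_b), (T_b - T_c), ... into T_a - T_last."""
    if not legs or any(leg is None for leg in legs):
        return None
    return sum(legs)


def observe(clock_offset_ns, sat_numbers):
    """Hypothetical data: satellite n adds the same n ns error at every station."""
    return {f"G{n:02d}": clock_offset_ns + n for n in sat_numbers}


# Hypothetical stations: A and C are too far apart to share satellites,
# but each shares five with the link station B.
obs_a = observe(30, range(1, 8))    # sees G01..G07
obs_b = observe(10, range(3, 13))   # sees G03..G12
obs_c = observe(-5, range(8, 15))   # sees G08..G14

direct_ac = common_view_offset(obs_a, obs_c)   # not enough common satellites
dt_ab = common_view_offset(obs_a, obs_b)
dt_bc = common_view_offset(obs_b, obs_c)
dt_ac = linked_offset(dt_ab, dt_bc)

print("direct A-C:", direct_ac)
print("linked A-C via B (ns):", dt_ac)
```

In this toy data set the direct A to C comparison is rejected for lack of common satellites, while the link through B still yields an estimate; real processing would also have to account for measurement noise and for the error accumulated at each link.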
Each node (which is guaranteed, under nominal conditions, to be no farther than 6000 km from its direct reference station) sends collected GNSS data to its reference node (MRT) and receives back synchronization products (clock model, synchronization noise, synchronization propagation errors, stability statistics and a summarizing synchronization integrity indicator). The synchronization loop is depicted in Figure 7 for a network three levels deep. Each MRT is in charge of computing the synchronization and the detailed monitoring parameters only for lower-level SyNs and MRTs; furthermore, a status report is sent to the Control Centre.

This architecture allows a higher level of customization of both the network topology and the synchronization process. By means of data meta-routing (implemented in the Data Server module), which allows information to be routed to multiple destinations, the network may have, for example, multiple Control Centres (a control hierarchy could be implemented to cope with network growth, just as paging and indirect indexing do in computer operating systems). This flexibility allows a different approach to Multi-Path Linked Common View, which can be realized by sending observables data to different, higher-level MRTs. When nodes join the network, the CC defines the path set for MPLCVTT, knowing the detailed status and performance of each node already in the network; paths can be recomputed when events occur: nodes moved, added or removed, or status changes (synchronization performance, link, observables quality, …).

Figure 8: SynchroNet runtime fault recovery

SynchroNet not only provides a configurable and customizable synchronization network but also allows automated or semi-automated network reconfiguration at runtime. In particular, SynchroNet can take automatic action if an MRT goes down by reallocating the whole branch under the failing node. An example is given in Figure 8.
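The branch reallocation described above can be pictured with a small Python sketch. It is only an illustration under stated assumptions: the child-to-parent dictionary, the function names and the node labels are hypothetical, and the rule used here (re-attach the failed MRT's direct children to a replacement chosen by the caller, or to the failed node's own reference node by default) is one plausible policy, not necessarily the one SynchroNet applies. The 6000 km baseline limit is not checked.

```python
def ancestors(parent_of, node):
    """Return the chain of reference nodes above `node`, nearest first."""
    chain = []
    parent = parent_of[node]
    while parent is not None:
        chain.append(parent)
        parent = parent_of[parent]
    return chain


def reallocate_branch(parent_of, failed, new_parent=None):
    """Return a new child -> parent map with `failed` removed.

    The direct children of `failed` are re-attached to `new_parent`, or to
    the failed node's own reference node when no replacement is given
    (an assumed default policy).
    """
    if failed not in parent_of:
        raise KeyError(failed)
    target = parent_of[failed] if new_parent is None else new_parent
    if target is None or target == failed or target not in parent_of:
        raise ValueError("no valid surviving reference node for the branch")
    if failed in ancestors(parent_of, target):
        raise ValueError("replacement node lies inside the failed branch")
    return {
        node: (target if parent == failed else parent)
        for node, parent in parent_of.items()
        if node != failed
    }


# Hypothetical three-level network: a root MRT, two MRTs, three SyNs.
topology = {
    "MRT0": None,
    "MRT1": "MRT0",
    "MRT2": "MRT0",
    "SyN1": "MRT1",
    "SyN2": "MRT1",
    "SyN3": "MRT2",
}

# MRT1 goes down; its branch is moved under MRT2.
recovered = reallocate_branch(topology, "MRT1", new_parent="MRT2")
print(recovered)
```

Returning a new mapping instead of mutating the old one keeps the previous topology available, which would suit a semi-automated mode where an operator confirms the change before it is applied.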
All the configuration steps are carried out by the main Control Centre, which is, in general, the only authority able to modify the network topology (add, move, remove and fully configure a node). In particular, each node is connected to the Control Centre by a dedicated VPN, which is separate from the network used for data exchange between the other network nodes. |

||||
