
Maritime augmented reality

The ship bridge has evolved over recent years with the introduction of Integrated Navigation Systems (INS). This article presents research conducted on Maritime Augmented Reality (M-AR).

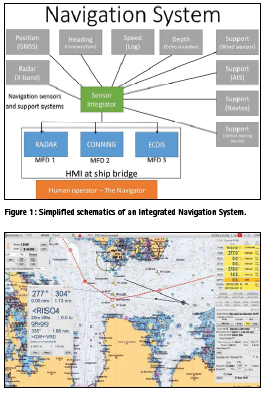

The maritime industry has been through a paradigm shift with the introduction of electronic navigation. The move from an analogue bridge with stand-alone navigation sensors and paper charts has resulted in a modern bridge with Integrated Navigation Systems (INS) supported by Electronic Navigational Charts (ENCs). The navigation sensors on board are now networked and integrated, enabling integrated information presentation on Multi-Function Displays (MFDs), as shown in Figure 1.

The sensors and systems within an INS include, but are not limited to (1):

1) The Electronic Position Fixing System (EPFS), which uses Global Navigation Satellite Systems (GNSS), for example the Global Positioning System (GPS), to provide the absolute position of the vessel.

2) Heading Control System (HCS), providing the heading of the vessel.

3) Speed and Distance Measurement Equipment (SDME), providing the speed of the vessel (and thus distance).

4) Echo Sounding System (ESS), providing depth measurements for the vessel.

5) Navigation support sensors, such as:

▪ Wind sensors, providing wind speed and direction.

▪ Optical Bearing Device (OBD) providing position lines.

▪ Automatic Identification System (AIS), an automatic tracking system used on ships and by Vessel Traffic Services (VTS).

6) Communication channels, such as the Global Maritime Distress and Safety System (GMDSS), which uses, for example, NAVTEX to receive navigational messages, and other channels for distributing data, such as satellite communication (SATCOM) or mobile broadband.

7) Electronic Chart Display and Information System (ECDIS), used for chart presentation and presentation of relevant information for the navigator.

8) Radar system, used as a means of terrestrial positioning.

9) Conning application providing information about the engine and manoeuvring status.

The information is distributed over Local Area Networks (LANs) and presented on MFDs. The aim of integrating the information presented to the navigator has been to improve the navigator's Situation Awareness (SA).
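The article does not state which data protocol the sensors use on the LAN. As an illustration only, assume they publish NMEA 0183 style sentences (standardized as IEC 61162-1), which is common for bridge sensors. The sketch validates each sentence's checksum (an XOR of the characters between `$` and `*`) and merges position (GGA) and true heading (HDT) sentences into one integrated record. The `IntegratedState` class is hypothetical; real INS integration also covers sensor selection, plausibility checks and timing.

```python
from dataclasses import dataclass
from functools import reduce
from typing import Optional


def nmea_checksum(body: str) -> str:
    """XOR of every character between '$' and '*', as two uppercase hex digits."""
    return f"{reduce(lambda acc, ch: acc ^ ord(ch), body, 0):02X}"


def validate(sentence: str) -> Optional[list[str]]:
    """Return the comma-separated fields if the checksum matches, else None."""
    if not sentence.startswith("$") or "*" not in sentence:
        return None
    body, _, given = sentence[1:].partition("*")
    if nmea_checksum(body) != given.strip().upper():
        return None
    return body.split(",")


def _to_degrees(value: str, hemisphere: str, deg_digits: int) -> float:
    # NMEA encodes latitude as ddmm.mmmm and longitude as dddmm.mmmm.
    degrees = int(value[:deg_digits]) + float(value[deg_digits:]) / 60.0
    return -degrees if hemisphere in ("S", "W") else degrees


@dataclass
class IntegratedState:
    """Hypothetical integrated picture combining several sensor feeds."""
    lat: Optional[float] = None
    lon: Optional[float] = None
    heading: Optional[float] = None

    def update(self, sentence: str) -> bool:
        fields = validate(sentence)
        if fields is None:
            return False  # corrupt sentence: keep the last good values
        kind = fields[0][-3:]  # drop the two-character talker ID (GP, HE, ...)
        if kind == "GGA" and fields[2] and fields[4]:
            self.lat = _to_degrees(fields[2], fields[3], 2)
            self.lon = _to_degrees(fields[4], fields[5], 3)
        elif kind == "HDT" and fields[1]:
            self.heading = float(fields[1])
        else:
            return False
        return True


state = IntegratedState()
state.update("$HEHDT,274.5,T*2B")   # accepted: checksum matches
state.update("$HEHDT,100.0,T*00")   # rejected as corrupt: heading stays 274.5
gga = "GPGGA,123519,4807.038,N,01131.000,E,1,08,0.9,545.4,M,46.9,M,,"
state.update(f"${gga}*{nmea_checksum(gga)}")
print(state)
```

Rejecting a corrupt sentence while keeping the last good value is one simple policy; an operational system would also flag stale data to the navigator.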

Even though the number of navigation aids has increased in the last decade, the craftsmanship of navigation remains the same. The words of Nathaniel Bowditch in “The American Practical Navigator” explain this best: “Marine navigation blends both science and art. A good navigator constantly thinks strategically, operationally, and tactically. He plans each voyage carefully. As it proceeds, he gathers navigational information from a variety of sources, evaluates this information, and determines his ship’s position… Some important elements of successful navigation cannot be acquired from any book or instructor. The science of navigation can be taught, but the art of navigation must be developed from experience.” (2)

With the introduction of electronic navigation aids, the basic craftsmanship of navigation has been challenged in a new way. This is partly due to over-reliance on the systems that provide information intended to improve the navigator's SA (3, 4). There are several documented cases and studies of, for example, ECDIS-assisted groundings resulting from over-reliance on the information presented on the ECDIS (5).

The most important task of the navigator is to conduct safe navigation, which is done by continuously finding and fixing the position of the vessel to ensure that the vessel is in safe waters.
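Fixing the position with the Optical Bearing Device listed earlier works by intersecting position lines. A minimal sketch of a two-bearing fix, assuming a flat local east/north grid in metres (adequate only over short littoral ranges) and true bearings from the ship to two charted landmarks. The landmark coordinates and the 30-degree minimum angle of cut are assumptions for illustration.

```python
import math


def bearing_vector(bearing_deg: float) -> tuple[float, float]:
    """Unit vector (east, north) for a true bearing measured clockwise from north."""
    rad = math.radians(bearing_deg)
    return math.sin(rad), math.cos(rad)


def cross_bearing_fix(mark1, brg1, mark2, brg2, min_cut_deg=30.0):
    """Intersect two position lines and return the ship's (east, north) position.

    mark1/mark2: landmark positions in metres on a local flat grid.
    brg1/brg2: true bearings from the ship to each landmark, in degrees.
    min_cut_deg: assumed threshold for rejecting near-parallel position lines.
    """
    cut = abs((brg1 - brg2 + 180.0) % 360.0 - 180.0)
    if cut < min_cut_deg or cut > 180.0 - min_cut_deg:
        raise ValueError(f"angle of cut {cut:.1f} deg gives a poor fix")
    d1x, d1y = bearing_vector(brg1)
    d2x, d2y = bearing_vector(brg2)
    # The ship P satisfies P = mark1 - t1*d1 = mark2 - t2*d2, so
    # t1*d1 - t2*d2 = mark1 - mark2: a 2x2 linear system solved by Cramer's rule.
    rx, ry = mark1[0] - mark2[0], mark1[1] - mark2[1]
    det = d1x * (-d2y) - (-d2x) * d1y
    t1 = (rx * (-d2y) - (-d2x) * ry) / det
    return mark1[0] - t1 * d1x, mark1[1] - t1 * d1y


# Hypothetical landmarks: a lighthouse 1 km north and a beacon 1 km east of the ship.
print(cross_bearing_fix((0.0, 1000.0), 0.0, (1000.0, 0.0), 90.0))
```

Rejecting shallow angles of cut reflects the fact that a small bearing error moves the intersection of near-parallel lines a long way.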

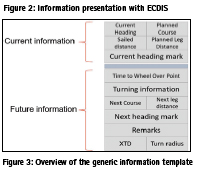

Information management is primarily conducted through the INS, e.g. on the ECDIS, Radar or Conning application. Original Equipment Manufacturers (OEMs) of INS do not share a common Graphical User Interface (GUI) for presenting information, and there is no standardization of the GUI used for information presentation. One example of information presentation is shown in Figure 2.

As shown in Figure 2, there is a large amount of information for the navigator to comprehend. Not all information is equally relevant, so it is important for the navigator to pick out the most important information to achieve safe navigation (6).

The Royal Norwegian Navy Naval Academy (RNoNA) has conducted research into what information is imperative for the navigator to collect in order to conduct a safe littoral passage (7). The primary information is closely connected to the passage plan, and the passage plan information is presented in a route monitor window (8). This GUI has been implemented and tested in an ECDIS application in operational use, and is shown in Figure 3 (9).
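Figure 3 is not reproduced in the text, so exactly which fields the route monitor window shows is an assumption here. Route monitoring commonly relies on quantities such as cross-track distance from the planned leg and time to the next waypoint; the sketch below derives them on a flat local grid in metres. The `LegStatus` and `leg_status` names and the sample leg are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class LegStatus:
    """Hypothetical route-monitor fields for the active leg."""
    xtd_m: float         # cross-track distance; positive = starboard of the track
    dist_to_wp_m: float  # along-track distance remaining to the "to" waypoint
    ttg_s: float         # time to go at the current speed over ground


def leg_status(wp_from, wp_to, pos, sog_knots):
    """Route-monitor style numbers for one planned leg (flat-earth sketch)."""
    lx, ly = wp_to[0] - wp_from[0], wp_to[1] - wp_from[1]
    leg_len = math.hypot(lx, ly)
    ux, uy = lx / leg_len, ly / leg_len          # unit vector along the leg
    px, py = pos[0] - wp_from[0], pos[1] - wp_from[1]
    along = px * ux + py * uy                    # progress along the leg
    # 2-D cross product: positive when the ship is to the right of the track.
    xtd = px * uy - py * ux
    remaining = leg_len - along
    speed_ms = sog_knots * 1852.0 / 3600.0       # 1 knot = 1852 m per hour
    ttg = remaining / speed_ms if speed_ms > 0 else math.inf
    return LegStatus(xtd, remaining, ttg)


# Hypothetical 1 NM leg due north; ship 50 m to starboard, halfway along, at 10 knots.
print(leg_status((0.0, 0.0), (0.0, 1852.0), (50.0, 926.0), 10.0))
```

For the sample leg this gives 50 m to starboard, 926 m remaining and about 180 s to go.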

Future concepts for maritime information management

The Royal Norwegian Navy (RNoN) aims to reduce the navigator's Head Down Time (HDT), so that the navigator can conduct safe navigation by continuously monitoring the surroundings of the vessel (10). This could be done by utilizing other presentation techniques that keep the navigator's eyes on the surroundings of the vessel while he or she collects the most vital information needed for safe navigation. This could also allow the navigator to spend more time on other tasks, helping to achieve and maintain the navigator's SA.
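The article does not define HDT numerically. One hedged way to quantify it, assuming eye-tracking samples labelled by area of interest (eye tracking has been used in related RNoNA work (9), but the labels and sampling scheme here are hypothetical), is the share of recorded time during which the gaze rests on bridge displays rather than outside the windows.

```python
from typing import Sequence

# Hypothetical area-of-interest labels; an actual study would define its own.
HEAD_DOWN_AOIS = {"ecdis", "radar", "conning"}


def head_down_share(samples: Sequence[tuple[float, str]]) -> float:
    """Fraction of recorded time spent looking at bridge displays.

    samples: (timestamp_s, area_of_interest) pairs sorted by time. Each label is
    assumed to hold until the next sample arrives.
    """
    if len(samples) < 2:
        return 0.0
    head_down = 0.0
    for (t0, aoi), (t1, _) in zip(samples, samples[1:]):
        if aoi in HEAD_DOWN_AOIS:
            head_down += t1 - t0
    total = samples[-1][0] - samples[0][0]
    return head_down / total if total > 0 else 0.0


gaze = [(0.0, "outside"), (4.0, "ecdis"), (6.0, "outside"), (9.0, "radar"), (10.0, "outside")]
print(head_down_share(gaze))  # 0.3: 3 s of the 10 s recording were head down
```

Comparing this share with and without a HUD or AR aid would be one way to test whether HDT is actually reduced.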

Technology readiness level

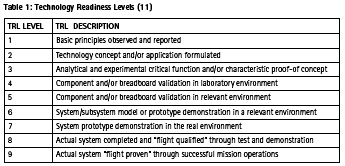

The Technology Readiness Levels (TRLs) are a measurement system that supports decision makers in assessing the maturity of a particular technology, and in consistently comparing the maturity of different types of technology (11). The TRLs are outlined in Table 1. It is an objective for the RNoN to utilize new technology to make operations more efficient or safer (12), and TRLs should be used as a tool for assessing the maturity of the technologies the RNoN is probing, in order to ensure successful implementation. The aim of developing tools that reduce the navigator's HDT was therefore examined using TRLs. Two different concepts have been trialled: the first is Head Up Displays (HUD), and the second is Augmented Reality (AR).
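Table 1 is only available as an image. The sketch below encodes the nine generic TRL descriptions used, for example, in the EU Horizon 2020 programme (their wording may differ from Table 1) together with the TRL assessments stated in this article, so the remaining gap to demonstration in an operational environment (TRL 7) can be read off. The `Assessment` class is a hypothetical record format.

```python
from dataclasses import dataclass

# Generic TRL wording as used in EU Horizon 2020 (an assumption; Table 1 may differ).
TRL_DESCRIPTIONS = {
    1: "basic principles observed",
    2: "technology concept formulated",
    3: "experimental proof of concept",
    4: "technology validated in lab",
    5: "technology validated in relevant environment",
    6: "technology demonstrated in relevant environment",
    7: "system prototype demonstration in operational environment",
    8: "system complete and qualified",
    9: "actual system proven in operational environment",
}


@dataclass(frozen=True)
class Assessment:
    """Hypothetical record of one technology's maturity in one domain."""
    technology: str
    domain: str
    trl: int

    def __post_init__(self):
        if self.trl not in TRL_DESCRIPTIONS:
            raise ValueError(f"TRL must be 1-9, got {self.trl}")

    def describe(self) -> str:
        return f"{self.technology} ({self.domain}): TRL {self.trl}, {TRL_DESCRIPTIONS[self.trl]}"

    def steps_to(self, target: int) -> int:
        """Number of TRL steps still needed; 0 if the target is already met."""
        return max(0, target - self.trl)


# Levels as stated in the article's text.
ASSESSMENTS = [
    Assessment("HUD", "aviation", 9),
    Assessment("HUD", "maritime (general)", 7),
    Assessment("HUD", "maritime (partner company)", 8),
    Assessment("HoloLens", "gaming", 8),
    Assessment("HoloLens", "maritime", 6),
]

for a in ASSESSMENTS:
    print(a.describe(), "| steps to TRL 7:", a.steps_to(7))
```

Keeping the domain in each record reflects the article's point that the same device can sit at different TRLs in different domains.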

Head Up Display

HUD has been used for several years, especially in the aviation industry, where it has reached TRL 9. In the maritime domain it has not been used much (TRL 7), and the RNoN decided to cooperate with a company that had already tested and validated HUD in the maritime domain, which brings HUD in the maritime domain to TRL 8. The chosen device is a high-resolution display mounted in the frame of a pair of glasses, as shown in Figure 4. Note that the frame does not need lenses for the device to be used.

The cooperation consisted of implementing the information template in the HUD; one of the three interfaces is shown in Figure 5. The interface is aligned with the navigator's information needs in littoral waters, with reference to Figure 3.

The display presents the information depicted in Figure 5. The preliminary results indicate a potential for reducing HDT; however, there are several challenges with using a HUD in a Head-Mounted Display (HMD). One of the major concerns is that the eyes must refocus frequently and quickly, from long distances when checking the surroundings of the ship to a very short distance (3 centimetres) when reading the display in the HMD. This could be addressed by mounting the HUD in, for example, the bridge windows, as other research programmes such as Ulstein Bridge Vision have shown (13). Challenges have also been identified with the use of HUD during the hours of darkness, when the HUD increases light pollution and degrades the navigator's night vision.

Augmented Reality

Some research has been done on Wearable, Immersive Augmented Reality (WIAR) in the maritime domain (14), but there is still a need to examine the specific contribution the technology should make in enhancing navigational safety performance and processes (15). Mixed Reality (MR) has shown good potential; for example, applications have been developed to aid leisure boat navigation using smartphones and MR/AR (16). The use and knowledge of AR have evolved as several large manufacturers, such as Microsoft and Google, have started releasing commercial products.

AR is a technology that combines data from the physical world with virtual or abstract data, such as graphics and audio. The data layer can be dynamically attached to the real world seen through the glasses, for instance a real-time Closest Point of Approach / Time to Closest Point of Approach (CPA/TCPA) notation attached to a moving ship. The navigator gets an extra layer of information that stays anchored to its reference point even if the ship turns or the navigator turns his or her head. The additional information will typically not replace reality, but expand it in one or more ways. By mixing the navigator's perception of the real world with graphical and auditory overlays representing key information, navigators may be able to reduce HDT and concentrate to a greater extent on handling a situation, thereby increasing SA (17). A further hypothesis is that presenting information attached to an egocentric world view will facilitate decision-making and reduce cognitive load by integrating related information directly into the field of view (18).
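The CPA/TCPA label mentioned above rests on a standard calculation: the Closest Point of Approach (CPA) is the minimum distance between two vessels, and TCPA is the time until it occurs. A minimal sketch, assuming straight-line, constant-velocity motion on a flat local grid, with positions in metres and velocities in metres per second. Where an AR system would take its target tracks from (radar, AIS) is not specified in the article and is left open here.

```python
import math


def cpa_tcpa(own_pos, own_vel, tgt_pos, tgt_vel):
    """Return (cpa_m, tcpa_s) for two vessels moving in straight lines.

    Positions are (east, north) in metres, velocities (east, north) in m/s.
    A negative TCPA means the closest point of approach is already past.
    """
    rx, ry = tgt_pos[0] - own_pos[0], tgt_pos[1] - own_pos[1]   # relative position
    vx, vy = tgt_vel[0] - own_vel[0], tgt_vel[1] - own_vel[1]   # relative velocity
    v2 = vx * vx + vy * vy
    if v2 == 0.0:
        # Identical velocities: the range never changes, so TCPA is undefined;
        # this sketch reports the current range and a TCPA of 0.
        return math.hypot(rx, ry), 0.0
    # |r + v t| is smallest where its derivative vanishes: t = -(r . v) / |v|^2.
    tcpa = -(rx * vx + ry * vy) / v2
    cpa = math.hypot(rx + vx * tcpa, ry + vy * tcpa)
    return cpa, tcpa


# Own ship heading north at 5 m/s; target 1 km east and 2 km north, heading west at 5 m/s.
cpa, tcpa = cpa_tcpa((0.0, 0.0), (0.0, 5.0), (1000.0, 2000.0), (-5.0, 0.0))
print(f"CPA {cpa:.0f} m in {tcpa:.0f} s")  # CPA 707 m in 300 s
```

In an AR overlay these two numbers would be recomputed as new track data arrives and drawn next to the target.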

It is critical for navigators to maintain SA of what is happening outside the ship. However, an increasing number of bridge systems and displays force the user to switch rapidly between the outside view and the screens inside. AR technologies could help solve this problem by overlaying the physical world with digital content, such as graphics, possibly supported by audio. AR is a new and complex design space, as it requires an extensive understanding of the users' context and of how the technology applies to that context. Understanding the real-world implications of rapidly shifting contextual factors is essential for designing systems that support operators' SA (17).
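Overlaying digital content on the physical world means mapping each target into the wearer's view so that a label stays on the target while the ship turns or the navigator turns his or her head. A simplified, yaw-only sketch, assuming the ship's true heading comes from the INS, the head yaw relative to the bow comes from the headset's tracking, and an ideal pinhole display with a hypothetical 30-degree horizontal field of view and 1280-pixel width. Real headsets also handle pitch, roll and lens calibration.

```python
import math


def true_bearing(own, target):
    """True bearing in degrees (clockwise from north) on a flat east/north grid."""
    return math.degrees(math.atan2(target[0] - own[0], target[1] - own[1])) % 360.0


def screen_x(own, target, ship_heading_deg, head_yaw_deg, fov_deg=30.0, width_px=1280):
    """Horizontal pixel column for a label on the target, or None if out of view.

    ship_heading_deg: true heading from the INS.
    head_yaw_deg: head rotation relative to the bow (positive = to starboard).
    fov_deg, width_px: hypothetical display parameters.
    """
    gaze = (ship_heading_deg + head_yaw_deg) % 360.0
    # Signed angle from the gaze direction to the target, wrapped to [-180, 180).
    offset = (true_bearing(own, target) - gaze + 180.0) % 360.0 - 180.0
    half = fov_deg / 2.0
    if abs(offset) > half:
        return None
    # Pinhole projection: the column grows with tan(offset), centred on the display.
    focal_px = (width_px / 2.0) / math.tan(math.radians(half))
    return width_px / 2.0 + focal_px * math.tan(math.radians(offset))


own, target = (0.0, 0.0), (0.0, 1000.0)          # target 1 km due north
print(screen_x(own, target, 350.0, 10.0))        # 640.0: looking straight at it
print(screen_x(own, target, 340.0, 10.0))        # ship yaws 10 deg to port: label moves right
print(screen_x(own, (0.0, -1000.0), 0.0, 0.0))   # None: target astern
```

Because the label position depends only on the difference between the target bearing and the combined ship-plus-head direction, any rotation of either is compensated and the label stays anchored to the target.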

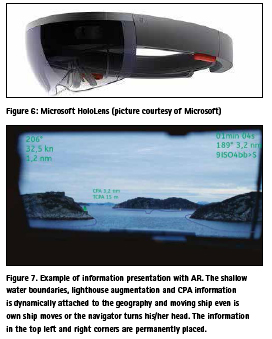

In the Maritime Augmented Reality (M-AR) project, the RNoN cooperates with other partners to investigate the use of AR technology in an operational maritime environment. The aim is to enhance the navigator's SA by reducing HDT, providing the navigator with augmented information where it is needed. The information template in Figure 3 is used as a baseline, but AR can also provide augmented information about the surroundings of the vessel. It is important to note that this information should not merely reproduce existing system symbology in augmented form (14). The M-AR project uses the Microsoft HoloLens (Figure 6), which has reached TRL 8 in the gaming domain (19). In the maritime domain, the HoloLens is at TRL 6. The aim of the project is to raise this to TRL 7 by demonstrating its use in an operational environment.

The project is still in an early phase, and a first version is planned for late 2018. The information presentation could look as shown in Figure 7.

Figure 7 is a preliminary sketch, and the further plan is for interaction designers to work on the information presentation. Great care needs to be taken in designing the new AR symbology so that it does not clutter or hide the view of the world. The key point of WIAR is that it provides the opportunity to present virtual parts of the world to the user through embedded or superimposed images, technical information, sound or haptic sensory information, which can be linked to other sensor inputs (15). The challenge for the M-AR project is to design and produce a prototype of this template, aimed at providing a higher degree of SA for the maritime navigator. Information presentation and information collection can be done in several different ways, and several areas of opportunity have been identified, as shown in Figure 8.
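One way to act on the clutter concern above is to cap the number of overlays and rank them. The sketch below is purely illustrative and not an M-AR design decision: it assumes each candidate label carries its CPA and TCPA, ranks labels by a hypothetical urgency rule (threatening approaches first, soonest first), and drops any label that would overlap a higher-priority one on the display. The `Label` class, thresholds and label spacing are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class Label:
    """Hypothetical AR label candidate."""
    name: str
    x_px: float    # horizontal position of the label on the display, in pixels
    cpa_m: float
    tcpa_s: float


def select_labels(candidates, max_labels=3, min_gap_px=120.0,
                  cpa_limit_m=1000.0, tcpa_limit_s=1200.0):
    """Pick at most max_labels non-overlapping labels, most urgent first."""
    def priority(lbl):
        # Hypothetical rule: targets inside both limits sort first (False < True),
        # then by soonest future TCPA, then by smallest CPA.
        threatening = 0 <= lbl.tcpa_s <= tcpa_limit_s and lbl.cpa_m <= cpa_limit_m
        soonest = lbl.tcpa_s if lbl.tcpa_s >= 0 else float("inf")
        return (not threatening, soonest, lbl.cpa_m)

    chosen = []
    for lbl in sorted(candidates, key=priority):
        if len(chosen) >= max_labels:
            break
        # Skip labels that would overlap one already chosen.
        if all(abs(lbl.x_px - c.x_px) >= min_gap_px for c in chosen):
            chosen.append(lbl)
    return chosen


labels = [
    Label("A", 600, 300, 400), Label("B", 650, 200, 600),
    Label("C", 900, 5000, 100), Label("D", 200, 800, -50),
    Label("E", 1100, 500, 1000),
]
print([lbl.name for lbl in select_labels(labels)])  # ['A', 'E', 'C']
```

Here B is suppressed because it would overlap the more urgent A, and D (already past its CPA) misses the cap; a real design would need user testing to choose such rules.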

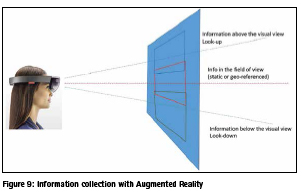

Other areas on the bridge that could be utilized are those above and below the bridge windows, where the navigator could collect selected information important for the passage. This is shown in Figure 9.

When the prototype is operational, valuable experience will be collected to further develop M-AR.

Conclusion

HUD is assessed as essentially adding displays to the navigator's field of view, which is not optimal. AR technology may offer suitable ways of displaying the navigational information that is today presented on the INS, integrating it better with the perceptual information on the bridge. This could help reduce the navigator's cognitive workload while he or she is required to keep constant watch over what is happening outside the window, and thus support safe navigation. However, the TRL of M-AR needs to increase before operationalization, and more research needs to be conducted before deciding on the design and on which information should be presented in AR.

References

1. IMO. Adoption of the Revised Performance Standards for Integrated Navigation Systems (INS). In: MSC, editor. London: IMO; 2007.

2. Bowditch N. The American Practical Navigator: An Epitome of Navigation, originally by Nathaniel Bowditch (1802). Defense Mapping Agency; 1995.

3. Lützhöft MH, Dekker SW. On your watch: automation on the bridge. The Journal of Navigation. 2002;55(1):83-96.

4. Lützhöft M. “The technology is great when it works”: Maritime Technology and Human Integration on the Ship’s Bridge: Linköping University Electronic Press; 2004.

5. MAIB. ECDIS-assisted grounding. MARS Report 200930. London: Marine Accident Investigation Branch; 2008.

6. Porathe T. Ship navigation- Information integration in the maritime domain. Information Design: Research and Practice. 2017.

7. Hareide OS, Mjelde FV, Glomsvoll O, Ostnes R, editors. Developing a High-Speed Craft Route Monitor Window. International Conference on Augmented Cognition; 2017: Springer.

8. Hareide OS, Ostnes R. Scan Pattern for the Maritime Navigator. Transnav. 2017;11(1):39-47.

9. Hareide OS, Ostnes R, editors. Validation of a Maritime Usability Study with Eye Tracking Data. 2018; Cham: Springer International Publishing.

10. Hareide OS. Improving Passage Information Management for the Modern Navigator. In: IALA, editor. 19th IALA Conference 2018; Incheon, South Korea: IALA; 2018.

11. Mankins JC. Technology readiness levels. White Paper, April. 1995;6.

12. Sjøforsvarsstaben. Sjøforsvarets Strategiske Konsept, 2016-2040. Bergen; 2014.

13. Ulstein. Ulstein Bridge Vision. ulstein.com; 2016. Available from: https://ulstein.com/innovations/bridge-vision.

14. Procee S, Borst C, van Paassen M, Mulder M, Bertram V. Toward Functional Augmented Reality in Marine Navigation: A Cognitive Work Analysis. 2017.

15. Grabowski M. Research on wearable, immersive augmented reality (WIAR) adoption in maritime navigation. The Journal of Navigation. 2015;68(3):453-64.

16. Porathe T, Ekskog J. Egocentric Leisure Boat Navigation in a Smartphone-based Augmented Reality Application. The Journal of Navigation. 2018:1-13.

17. Frydenberg S, Nordby K, Eikenes J. Exploring designs of augmented reality systems for ship bridges in arctic waters. Human Factors. 2018;26:27.

18. Porathe T. 3-D nautical charts and safe navigation: Institutionen för Innovation, Design och Produktutveckling; 2006.

19. Von Itzstein GS, Billinghurst M, Smith RT, Thomas BH. Augmented Reality Entertainment: Taking Gaming Out of the Box. Encyclopedia of Computer Graphics and Games: Springer; 2017. p. 1-9.
